Thanks for the feedback, all. When we as consumers are making product purchasing decisions, there are a variety of sources we can utilise to inform our decisions. Research indicates that word of mouth remains one of the most influential factors, and most of us also use a combination of user reviews and expert reviews. In my opinion, it is worthwhile using a variety of sources. Probably the biggest strength of a user review is chancing upon a reviewer with a similar use case who can offer some personally relevant insight (if you can trust it is a genuine perspective).

There is a good reason for the potential disparity between user reviews and expert reviews, and respectfully, I’m not sure that there is a need to reconcile these differences directly from CHOICE’s perspective, although we do intend to prioritise what members have to say and to continue improving and developing avenues for people to provide input. However, we can say with certainty that relying on mass consumer reviews alone also has some significant shortcomings we should take care with. For example, we as consumers are particularly swayed by price, branding and marketing. Premium brands are often perceived to perform better or be more durable, but our testing often reveals this perception is not based on facts.

A regular consumer rarely has the opportunity to thoroughly test a range of products in a controlled environment. There is often an emotional element to consumer reviews; it can be connected to other unrelated events occurring at the time, or to personal trust or value judgments made during the recommendation or purchase process. Was the review item in question a gift from family or a dear friend? Were there price or salesperson influences at play? These things will influence the perception of the average genuine reviewer. I don’t think I need to cover the issue of fake or purchased reviews; it’s estimated that 30-40% of reviews on a given site are potentially fake or influenced.

There is a host of research out there, independent of us, that confirms the message I’m relaying; take this study from the University of Colorado, for example.

Addressing the durability point, there are some ongoing lab tests in place at CHOICE. Mainly, though, we survey large numbers of consumers about their experience, following the same people over time, to address durability and quality issues in a real-world setting. The results can change our recommendations or alter our tests. Our lab experts also have a deep understanding of manufacturing quality, as we have the opportunity to undertake certain types of manufacturing testing, or to access manufacturers from an independent position, in ways that aren’t available to most reviewers, professional or otherwise.

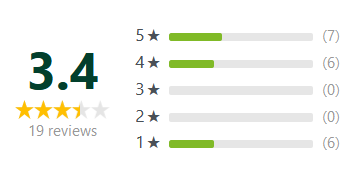

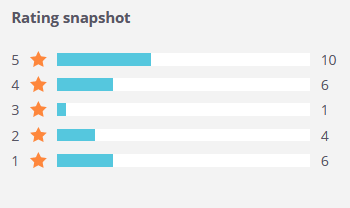

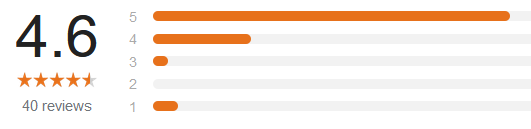

That aside, I’m not convinced that most user recommendations address the issue of manufacturing quality, long-term usability or durability anyway. For a start, most reviews are prompted soon after purchase. In mass markets, there will also always be some issues experienced, for whatever reason, by a small percentage of people who don’t represent the whole. Sometimes this will be a manufacturing fault or issue that has prompted several hundred people to comment when the total number of owners is in the hundreds of thousands. Other factors, such as how the product is used or events outside anyone’s control (power surges or brownouts, for example), can skew user review information.

The problems raised above can occur on a large scale, which can create a false trend even across different platforms. The impact of branding means that you can have a poor product and an excellent product within the same category, even the same product range. The indicators users provide are unlikely to be precise enough to identify these issues and more, except perhaps in the most acute cases (where explosions or safety issues are occurring).

As mentioned, for these reasons, I can’t see that CHOICE would seek to reassess our recommendations based on differences between our results and mass user reviews. However, that doesn’t mean that we are not evolving. We are listening to what people are saying on review and social platforms so that we can improve our testing and reviews. Here are some examples of things being continually improved or currently prioritised for improvement:

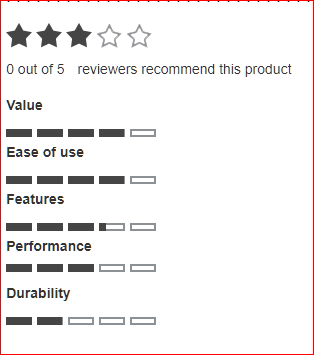

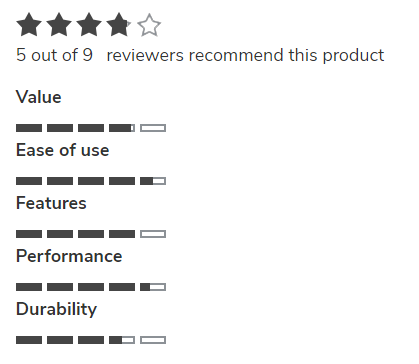

- The way we display user reviews on our site is currently being addressed

- The testing process in general can be tweaked as new needs arise or new information is provided; this is balanced with the need to provide consistency and clarity in our advice

- The way we explain the difference between a popular trend and an expert opinion can be brought forward or explored in more depth

- We will continue to undertake extensive, individual-level communications on real-world experience to develop statistically significant, sound and trustworthy advice

- We also seek to broaden our discussions with as many Australians as possible on a range of different platforms, and to enable different information sources alongside expert opinion

- We accept that we can’t resolve every personal need at an individual level on a mass scale, but we are personalising tools and reviews as much as possible. One example is with our health insurance review, which has gone from a static recommendation to an interactive one

Please keep adding your thoughts. We will consider them all carefully and make them available to different departments in the organisation.